Original presentation:

http://www.infoq.com/presentations/Evolving-Programming-for-the-Cloud

Universal Scalability Law:

Throughput limited by two factors:

1. contention - bottleneck on shared resources

2. coherency - communication and coordination among multiple nodes
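
For reference, the two factors above map onto the two coefficients in the usual statement of the Universal Scalability Law. The symbols are the conventional ones and are an assumption here, not taken from the talk: C(N) is relative capacity on N nodes, \alpha is the contention coefficient, and \beta is the coherency coefficient.

$$
C(N) = \frac{N}{1 + \alpha (N - 1) + \beta N (N - 1)}
$$

With \alpha = \beta = 0 capacity grows linearly with N. With \beta = 0 the formula reduces to Amdahl's law. Any \beta > 0 means throughput eventually peaks and then falls as nodes are added.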

Programming in the cloud:

Parallelism

routing requests

aggregating services

queuing work

Async I/O and Futures

workload/data/processing partition

pipelines, map-reduce
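
A minimal sketch of two items from the list above, aggregating services and futures, assuming Python's standard `concurrent.futures`. The functions `fetch_profile` and `fetch_orders` are hypothetical stand-ins for remote service calls and do not come from the presentation.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_profile(user_id):
    """Hypothetical service call; the sleep stands in for network latency."""
    time.sleep(0.05)
    return {"user": user_id}


def fetch_orders(user_id):
    """Hypothetical second service call."""
    time.sleep(0.05)
    return {"orders": [f"order-{user_id}-1"]}


def aggregate(user_id, timeout_s=1.0):
    """Fan out to both services in parallel and merge their responses."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        futures = [
            pool.submit(fetch_profile, user_id),
            pool.submit(fetch_orders, user_id),
        ]
        merged = {}
        for future in futures:
            # result() blocks until that call finishes or the timeout expires.
            merged.update(future.result(timeout=timeout_s))
    return merged
```

Because both calls run concurrently, the aggregate takes roughly as long as the slowest call rather than the sum of the two.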

Layering

strict layered system

common code in frameworks

-- configuration

-- instrumentation

-- Failure management

Services

composition

dependency

addressing

persistence

deployment

State management

Stateless instances

Durable state in persistent storage

Data model

Distributed key-value stores

Constrained by design (no ER, limited schema)

plan to shard

plan to de-normalize

- optimize for reads

- pre-calculate joins/aggregations/sorts

- use async queue to update

- tolerate transient inconsistency
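
The data-model points above can be sketched together, under heavy simplification: in-memory dicts stand in for a distributed key-value store, and `NUM_SHARDS`, `record_order`, `apply_pending_updates`, and `total_spent` are hypothetical names chosen for illustration.

```python
import hashlib
import queue

NUM_SHARDS = 4  # assumed shard count for the sketch
shards = [dict() for _ in range(NUM_SHARDS)]  # stand-in for a key-value store
updates = queue.Queue()  # async queue between the write and read sides


def shard_for(key):
    """Map a key to a shard with a hash that is stable across processes."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % NUM_SHARDS


def record_order(user_id, amount):
    """Write path: enqueue the change and return immediately."""
    updates.put((user_id, amount))


def apply_pending_updates():
    """Worker step: fold queued writes into the pre-calculated totals."""
    while True:
        try:
            user_id, amount = updates.get_nowait()
        except queue.Empty:
            break
        store = shards[shard_for(user_id)]
        store[user_id] = store.get(user_id, 0) + amount


def total_spent(user_id):
    """Read path: a single key lookup, no join or aggregation at read time."""
    return shards[shard_for(user_id)].get(user_id, 0)
```

Between `record_order` and the next `apply_pending_updates` run, reads return the old total. That window is the transient inconsistency the design accepts in exchange for cheap reads.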

Failure handling

Expect failures and handle them

- Graceful degradation

- timeouts and retries

- throttling and back-off
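
A rough sketch of retries with exponential back-off and graceful degradation, assuming the remote call signals failure by raising Python's built-in `TimeoutError` or `ConnectionError`. The helper names `call_with_retries` and `with_fallback` and the default values are illustrative choices, not taken from the presentation.

```python
import random
import time


def call_with_retries(operation, attempts=4, base_delay=0.1, max_delay=2.0,
                      sleep=time.sleep):
    """Call operation(), retrying transient failures with capped back-off."""
    for attempt in range(attempts):
        try:
            return operation()
        except (TimeoutError, ConnectionError):
            if attempt == attempts - 1:
                raise  # out of retries: let the caller decide what to do
            # The delay ceiling doubles each attempt; random jitter keeps
            # many clients from retrying in lockstep.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, ceiling))


def with_fallback(operation, fallback_value):
    """Graceful degradation: serve a fallback when the dependency is down."""
    try:
        return operation()
    except (TimeoutError, ConnectionError):
        return fallback_value
```

The `sleep` parameter exists only so the delay can be replaced in tests. In a real service the fallback might be a cached or partial response.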

Testing

Test early and often (Test-driven and Test-first)

Test end-to-end

Operation in the cloud:

DevOps mindset

- automate, automate, automate

- If you do it more than twice, script it

Configuration Injection

- Inject at boot time and run-time

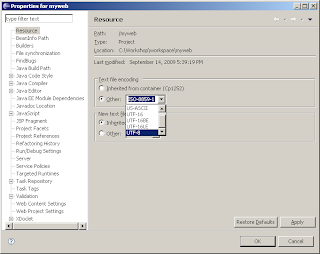

- Separate code and configuration
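
One common way to keep code and configuration separate is to read settings from the process environment. The sketch below assumes that approach. The variable names `APP_DB_URL` and `APP_REQUEST_TIMEOUT_S` and the defaults are hypothetical.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    db_url: str
    request_timeout_s: float


def load_settings(env=os.environ):
    """Build settings from an injected mapping; the code holds only defaults."""
    return Settings(
        db_url=env.get("APP_DB_URL", "sqlite:///local.db"),
        request_timeout_s=float(env.get("APP_REQUEST_TIMEOUT_S", "2.0")),
    )
```

Calling `load_settings()` once at startup covers boot-time injection. Calling it again on a reload signal or a timer is one way to pick up run-time changes without redeploying code.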

Instrumentation

- Fully instrument all components

- Remotely attach/profile/debug components

- Logging alone is insufficient

- Use a framework

Monitoring

- monitor requests end-to-end

- monitor activity and performance

- metrics, metrics, metrics

- understand your typical system behavior
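
As a small illustration of collecting metrics on activity and performance, the sketch below records per-call latency and error counts in process. A real deployment would normally ship these to a metrics framework. The names `instrumented`, `latencies`, and `errors` are hypothetical.

```python
import time
from collections import defaultdict
from functools import wraps

latencies = defaultdict(list)  # metric name -> observed durations in seconds
errors = defaultdict(int)      # metric name -> count of failed calls


def instrumented(name):
    """Decorator that records latency and failures for the wrapped function."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            except Exception:
                errors[name] += 1
                raise
            finally:
                # Runs on success and failure, so every call is measured.
                latencies[name].append(time.perf_counter() - start)
        return wrapper
    return decorator
```

Collecting these numbers continuously is what makes it possible to say what typical behavior looks like, and therefore to notice when the system departs from it.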

Metering

- Variable cost

- Efficiency matters (inefficiency multiplied by many instances)